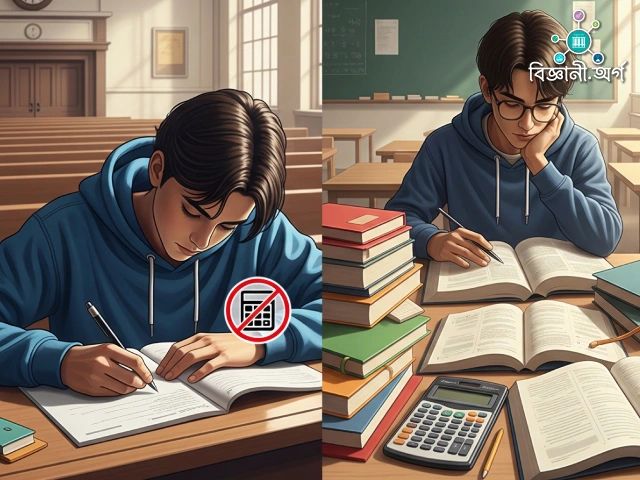

There was a time when strict rules stood guard at the entrance of exam halls. If you were found with even a tiny calculator in your pocket, it would be immediately confiscated. The belief was that only by solving complex mathematical problems by hand could one truly demonstrate real skill. But times have changed. Today, the use of calculators is permitted in many exams. Examiners now realize that proficiency isn’t about performing lengthy, mechanical calculations, but about applying the correct methods and reasoning. By letting machines bear the mechanical burden of calculation, students can apply their intellect where it matters most.

Another major shift in examinations has come in the form of open-book tests. Once, bringing books or notes into the exam hall was considered a ‘crime’. But now, many universities and professional exams allow candidates to bring reference materials. The logic is clear—true skill lies not in rote memorization, but in the ability to find, utilize, and apply relevant information to solve problems. This mirrors the workplace, where engineers, doctors, lawyers, and researchers regularly use books, databases, and online resources.

Rapid technological progress has now brought us to a new threshold. Just like calculators, artificial intelligence tools—such as ChatGPT and others—may soon transform how we learn and assess knowledge. Some may see this as ‘excessive assistance’, but consider this: when tasks like information retrieval, language translation, data analysis, or writing can be accomplished instantly, the true purpose of exams should be to evaluate one’s skill in using these tools to solve problems.

We can already see practical examples of this shift in workplace recruitment. Recently, Meta introduced a “Vibe Coding” method in their programmer recruitment process. Candidates are permitted to use artificial intelligence tools—such as ChatGPT or GitHub Copilot—during the test. The rationale is that, since engineers routinely use such tools on the job, the test should reflect that real-world experience. CEO Mark Zuckerberg has even stated that, in the future, most of Meta’s code will be written by AI.

This approach is not without controversy. Supporters argue that what really matters is how skillfully one can leverage AI. Critics, on the other hand, warn that we may produce a generation unable to correct AI’s mistakes. In programming circles, “vibe coding” is often used to describe exactly this risk: programmers relying solely on AI suggestions without deepening their own understanding.

Imagine if this same concept were applied to legal professions, where junior lawyers use AI assistants like “Harvey” to analyze complex cases. The real question would then be—can you use AI effectively to arrive at relevant and accurate answers, or are you simply going along with the ‘vibe’?

The use of AI in education and assessment seems inevitable. However, it brings new challenges. Questions must be designed so that an AI tool cannot simply hand over the answer; instead, students must evaluate AI outputs, edit them appropriately, and construct solutions with their own reasoning.

Throughout the history of education, technological shifts have often been met first with skepticism, then gradually accepted. Calculators, once considered ‘cheating’, have become indispensable. In the future, perhaps ChatGPT or similar AI tools will sit beside examinees in the exam hall, just like today’s pencil boxes or calculators. The purpose of exams will then be to gauge how adeptly you can use technology to complement your own knowledge.

The question is no longer whether AI should be allowed in exams. Rather, it is: Are we ready for an educational and assessment system where humans and machines work together, and where true expertise lies in mastering that synergy?
