We’ve seen AI at work on city streets, inside homes, and in offices. Cameras that monitor traffic, recognize faces, and identify products in stores are now familiar. But underwater? There are no people there, and even our own eyes don’t work properly. That is exactly why teaching computers to “see” the underwater world is such a hard problem for computer vision.

Think about taking a photo underwater. It feels a lot like trying to make out something far away while standing in fog. Light fades quickly, colors shift, water and suspended particles make scenes hazy, and refraction sometimes distorts the actual shape of objects. So a task that is easy above water, such as telling a fish from seaweed, suddenly becomes difficult. When image quality drops, even human eyes make mistakes, and machines trained only on clear images are likely to make even more. Dr. Alimur Reza points out that the problem isn’t just technological; it’s also environmental. Where people don’t usually go, there is less data, less experience, and less practice building systems.
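To make the color shift concrete, here is a minimal sketch of how water dims each color channel with distance. It assumes simple exponential attenuation, and the coefficients in `ATTENUATION_PER_M` are illustrative values chosen only so that red fades fastest, as it does in real water. They are not measurements, and nothing here comes from Dr. Reza’s work.

```python
import math

# Illustrative per-metre attenuation coefficients (assumed, not measured).
# They are chosen only so that red light fades fastest.
ATTENUATION_PER_M = {"red": 0.60, "green": 0.10, "blue": 0.05}

def attenuate(pixel, distance_m):
    """Dim each colour channel by exp(-coefficient * distance)."""
    return {
        channel: value * math.exp(-ATTENUATION_PER_M[channel] * distance_m)
        for channel, value in pixel.items()
    }

# A pure white pixel seen through 5 m of water.
white = {"red": 1.0, "green": 1.0, "blue": 1.0}
seen = attenuate(white, 5.0)
print({channel: round(value, 2) for channel, value in seen.items()})
```

With these assumed numbers, the white pixel keeps most of its blue and green but almost none of its red, which is why underwater photos tend to look blue-green.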

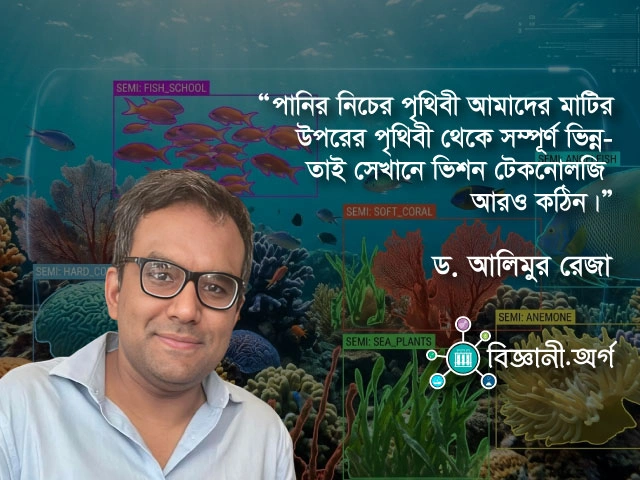

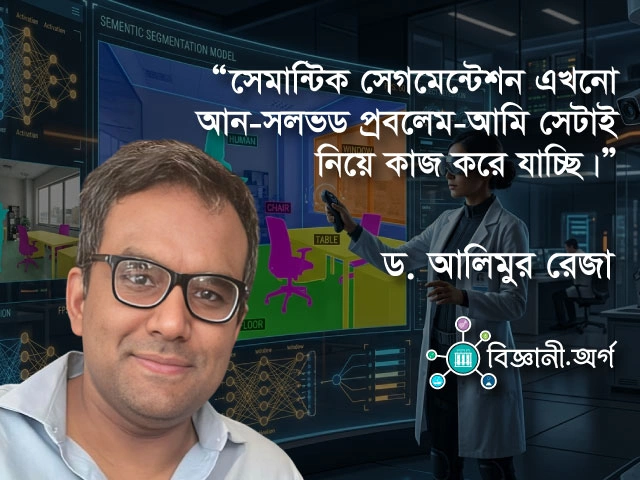

This is where semantic segmentation, the main focus of Dr. Alimur Reza’s research, comes in. Semantic segmentation means dividing a photo or video frame into meaningful regions and labeling each one: this is a fish, that’s a rock, that’s coral, that’s seaweed. You could call it drawing an intelligent map. But mapping underwater is even harder, because the same fish can appear to be a different color depending on the light, wave motion makes edges waver, and objects blend together so much that separating them becomes difficult. Despite these complications, researchers aim to build models that work reliably on real underwater video.
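For readers who like to see the idea in code, the “intelligent map” can be pictured as a grid with one class label per pixel. The sketch below uses a tiny hand-written grid, not the output of any real model, and the class numbers in `CLASS_NAMES` are hypothetical. It only shows what a segmentation result looks like and how it might be summarized.

```python
from collections import Counter

# Hypothetical class ids for the labels named above.
CLASS_NAMES = {0: "water", 1: "fish", 2: "rock", 3: "coral", 4: "seaweed"}

# A hand-written 4x6 "image": each number is the class of one pixel.
label_map = [
    [0, 0, 1, 1, 0, 0],
    [0, 1, 1, 1, 0, 4],
    [0, 0, 0, 0, 4, 4],
    [2, 2, 3, 3, 3, 4],
]

def class_coverage(labels):
    """Return the fraction of pixels assigned to each class."""
    counts = Counter(class_id for row in labels for class_id in row)
    total = sum(counts.values())
    return {CLASS_NAMES[class_id]: n / total for class_id, n in counts.items()}

coverage = class_coverage(label_map)
for name, fraction in sorted(coverage.items()):
    print(f"{name}: {fraction:.0%} of the frame")
```

A real model has to produce such a grid from raw pixels, and that is the difficult part. Once the grid exists, simple summaries like this one could feed a monitoring dashboard.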

Dr. Alimur Reza shares that in their recent research, they’ve developed a semantic segmentation model for underwater environments that can handle a greater number of object categories. The main idea is simple: just as we use technology on land for security or health monitoring, AI can also be used to observe underwater life and environments. This is especially important for monitoring fish health or managing aquaculture. In Nordic countries, where large-scale fishing and aquaculture take place, AI-based monitoring can be crucial for quickly detecting fish diseases, behavioral changes, or issues in the water environment.

This is even more relevant for Bangladesh. With our river-dependent way of life, coastal regions, and rich fisheries resources, our connection to water runs deep. Imagine a future where cameras or sensors in fish farms use AI to detect abnormal fish behavior, or to spot pollution and other underwater changes early. That could help reduce losses. If this becomes reality one day, it would link technology directly to the Sustainable Development Goals (SDGs) in agriculture and fisheries, such as food security, biodiversity conservation, and the sustainable use of aquatic resources.

But the main lesson from Dr. Alimur Reza is that technology advances by following our questions. In the environments we navigate every day, technology develops quickly; in worlds beyond our immediate sight, progress is slow. But the future won’t remain confined to our homes—the vast, unseen worlds of oceans, rivers, and deep waters will one day be mapped with data, vision, and intelligence. And among those working toward this future is Dr. Alimur Reza—who reminds us that science isn’t just about making the familiar world faster; it’s also about building our ability to understand the unknown.

In the full interview, Dr. Alimur Reza talks in more detail about his educational journey, research insights, the future of robotics, and practical questions around AI usage. Read and watch Dr. Alimur Reza’s full interview below.
